Ant environment recreation in Python

This work focused on recreating real-world ant testing environments in simulation, so that we could generate what an ant would see. It builds on the extensive work of my colleague Oluwaseyi Oladipupo Jesusanmi, who developed the entire scanning pipeline and worked with the ants to gather the trajectory data. Ants are incredible navigators. With very limited brain power, they can explore unfamiliar environments, learn routes, and reliably find their way between their nest and food sources. This project looks at how they do that, and how we can recreate similar behaviour in artificial systems. My part of the project was developing a grid-based simulation from the ant dataset. Dataset published here

Grid Environment

To study this, we built a digital version of a real ant environment. Using 3D scanning and simulation tools, we recreated a laboratory arena and captured what the world looks like from an ant's perspective. Instead of giving our models full access to the environment, we broke it down into a grid of visual snapshots: thousands of panoramic views taken from different positions and directions. This means our algorithms experience the world in a similar way to ants: locally, visually, and step by step. The grid-based setup lets us test how well different learning systems can move through space using only what they “see,” rather than relying on maps or global knowledge. It also made the environment easier to render. The simulation lets us gather datasets from the first-person perspective of each ant, a step towards the potential development of LBMs.
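
As a rough illustration of the idea, the snapshot grid can be thought of as a lookup table from a position and heading to a stored view. The sketch below is not the project's actual data format: the `SnapshotGrid` class, the eight discrete headings, the three actions, and the placeholder strings standing in for panoramic images are all assumptions made for the example.

```python
from dataclasses import dataclass, field

# Assumption: headings are discretised into 8 steps of 45 degrees.
N_HEADINGS = 8
# Unit step for each heading index (0 = +x, increasing counter-clockwise).
STEPS = [(1, 0), (1, 1), (0, 1), (-1, 1), (-1, 0), (-1, -1), (0, -1), (1, -1)]


@dataclass
class SnapshotGrid:
    """Hypothetical container mapping (x, y, heading) to a stored view."""
    width: int
    height: int
    views: dict = field(default_factory=dict)

    def add_view(self, x, y, heading, snapshot):
        self.views[(x, y, heading % N_HEADINGS)] = snapshot

    def observe(self, x, y, heading):
        """Return only the local view; the agent never sees the whole map."""
        return self.views[(x, y, heading % N_HEADINGS)]

    def step(self, x, y, heading, action):
        """Apply 'left', 'right' or 'forward'; moves off the grid are ignored."""
        if action == "left":
            return x, y, (heading + 1) % N_HEADINGS
        if action == "right":
            return x, y, (heading - 1) % N_HEADINGS
        if action == "forward":
            dx, dy = STEPS[heading % N_HEADINGS]
            nx, ny = x + dx, y + dy
            if 0 <= nx < self.width and 0 <= ny < self.height:
                return nx, ny, heading
            return x, y, heading
        raise ValueError(f"unknown action: {action}")


def build_toy_grid(width, height):
    """Fill a grid with placeholder strings in place of real panoramic images."""
    grid = SnapshotGrid(width, height)
    for x in range(width):
        for y in range(height):
            for h in range(N_HEADINGS):
                grid.add_view(x, y, h, f"view_x{x}_y{y}_h{h}")
    return grid
```

An agent built on this interface would alternate between `observe` and `step`, so everything it learns has to come from the sequence of local views rather than from coordinates.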

Ant recreation

We also collected real data from ants exploring the arena. By tracking their movements, we could study how they behave when learning a new environment. One key observation is that ants don’t just move directly to a goal. Instead, they:

- Explore widely

- Make frequent turns and oscillations

- Gradually refine their routes over time

These behaviours help them gather information and adapt if the environment changes. Capturing this balance between exploration and efficiency is one of the main challenges for artificial systems.

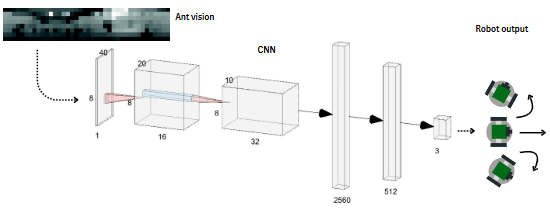

Preliminary work with GAs

As a first step, we tested genetic algorithms (GAs), a type of optimisation inspired by evolution.

In this setup:

- Each “agent” represents a possible navigation strategy, encoded by an evolving neural network

- Better strategies (those that get closer to the food target) are selected

- Over time, these strategies evolve through mutation, with Gaussian noise added to the network weights and biases
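
The loop described in this list can be sketched in a few lines of Python. This is a toy version under several assumptions, not the setup used in the project: the network is a single tanh layer with six parameters, the agent's position stands in for the visual snapshot it would see, selection simply keeps the top few agents unchanged, and `TARGET`, `policy`, `fitness`, `mutate` and `evolve` are hypothetical names.

```python
import math
import random

TARGET = (5.0, 3.0)  # hypothetical food location in a toy 2D arena
GENOME_SIZE = 6      # 4 weights + 2 biases for a 2-input, 2-output network


def policy(genome, obs):
    """One-layer tanh network mapping an observation to a (dx, dy) step."""
    w, b = genome[:4], genome[4:]
    dx = math.tanh(w[0] * obs[0] + w[1] * obs[1] + b[0])
    dy = math.tanh(w[2] * obs[0] + w[3] * obs[1] + b[1])
    return dx, dy


def fitness(genome, steps=20):
    """Negative final distance to TARGET, so agents ending closer score higher."""
    x, y = 0.0, 0.0
    for _ in range(steps):
        # Assumption: the position stands in for the view seen at that position.
        dx, dy = policy(genome, (x, y))
        x, y = x + dx, y + dy
    return -math.dist((x, y), TARGET)


def mutate(genome, sigma, rng):
    """Return a copy with Gaussian noise added to every weight and bias."""
    return [g + rng.gauss(0.0, sigma) for g in genome]


def evolve(pop_size=30, n_parents=6, generations=40, sigma=0.2, seed=0):
    """Keep the best n_parents each generation and refill with mutated copies."""
    rng = random.Random(seed)
    population = [[rng.gauss(0.0, sigma) for _ in range(GENOME_SIZE)]
                  for _ in range(pop_size)]
    history = []  # best fitness at the start of each generation
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        parents = population[:n_parents]
        children = [mutate(rng.choice(parents), sigma, rng)
                    for _ in range(pop_size - n_parents)]
        population = parents + children
    best = max(population, key=fitness)
    return best, history
```

Because the parents survive unchanged, the best fitness in this sketch can never get worse from one generation to the next. That kind of pure optimisation pressure is one plausible reason evolved agents settle on direct routes.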

We found that genetic algorithms can learn effective routes through the environment. In many cases, they quickly discover direct paths to the target. However, their behaviour differs from real ants:

- They tend to take straighter, more efficient paths

- They show less exploration and fewer directional changes

- They resemble the average outcome of many ants, rather than the behaviour of a single individual
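
One way to put numbers on these differences is to compute simple path statistics for each trajectory. The sketch below is an illustration rather than the analysis used in this project: it assumes a trajectory is a list of `(x, y)` points, and the `straightness` and `count_turns` functions and the 30 degree turn threshold are hypothetical choices.

```python
import math


def path_length(points):
    """Total distance travelled along the trajectory."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def straightness(points):
    """Net displacement over path length: 1.0 for a straight line, lower if winding."""
    total = path_length(points)
    if total == 0:
        return 0.0
    return math.dist(points[0], points[-1]) / total


def count_turns(points, threshold_deg=30.0):
    """Count heading changes between consecutive steps that exceed the threshold."""
    headings = [math.atan2(b[1] - a[1], b[0] - a[0])
                for a, b in zip(points, points[1:]) if a != b]
    turns = 0
    for h0, h1 in zip(headings, headings[1:]):
        # Wrap the difference into [-pi, pi) so a small turn is never read as a large one.
        diff = (h1 - h0 + math.pi) % (2 * math.pi) - math.pi
        if abs(math.degrees(diff)) > threshold_deg:
            turns += 1
    return turns
```

Under these definitions, a direct path scores a straightness of 1.0 with no turns, while a winding, oscillating path scores lower and registers more turns.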

This suggests that while GAs are good at optimisation, they may miss some of the richer, exploratory behaviours seen in biology.